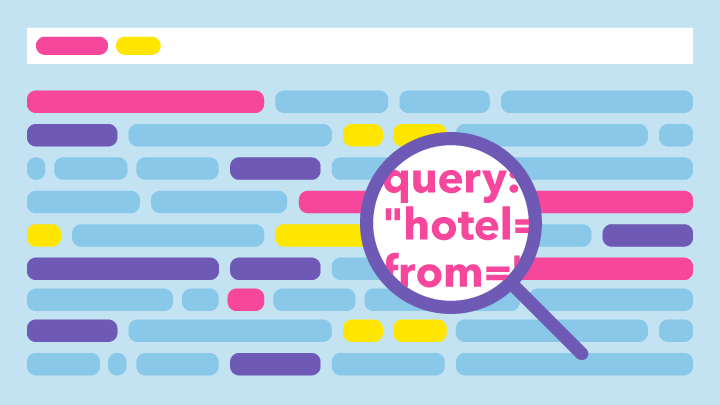

This article is for people in data science or analytics roles who are familiar with terms such as confidence intervals, databases, and workflows, and who need to implement anomaly detection for users with different needs. I will share my experience from working at trivago, with a specific focus on internet traffic. Rather than delving into the mathematical models (many articles already cover them well), I aim to provide insights into real-world situations spanning a wide range of business needs, each of which calls for a solution tailored to a different type of stakeholder.
